Best Practices for Data Enrichment

Key Takeaways:

Data is the backbone of modern business. But raw data, on its own, rarely tells the full story.

Data enrichment is what solves that problem.

It takes the incomplete records you already have and layers in the context, accuracy, and depth needed to make them genuinely useful: for sales, marketing, risk management, supplier vetting, and beyond.

And the need has never been more urgent. Poor data quality costs companies millions of dollars every year.

Done right, data enrichment directly reduces those losses.

That’s why this article walks through five core best practices that help teams enrich data in a way that is accurate, sustainable, and built for real-world use.

Enrichment adds to your data. It does not fix it.

And that distinction matters more than most teams realize.

When you enrich records that already contain duplicates, formatting inconsistencies, or missing key fields, the external data you append simply inherits those problems.

You end up with more data, but not better data.

Dr. Thomas C. Redman, the President of Data Quality Solutions, a company that helps other firms solve their data quality problems, explains why poor data is such a problem:

Illustration: Veridion / Quote: Harvard Business Review

So, before any enrichment begins, teams should audit their existing records.

That means:

Procter & Gamble is a good example of what proper data hygiene can unlock.

P&G once struggled with fragmented internal data.

Multiple systems, including 48 separate SAP instances, created duplicate records and inconsistent product information, making reporting and operations inefficient.

To fix this, the company focused on cleaning and standardizing its master data. P&G introduced data quality tools, automated integrations, and centralized data management to synchronize records across systems.

As a result, the company reduced errors, removed hidden operational costs, and improved visibility across its supply chain.

Laura Becker, P&G's President of Global Business Services, explained:

Illustration: Veridion / Quote: GS1

The lesson here is clear. P&G did not start by adding more data. They started by making their existing internal data reliable and clean.
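Here is the same idea at a much smaller scale. The minimal Python sketch below shows one hygiene step: it normalizes website domains and collapses duplicate company records before any external data is appended. The `CompanyRecord` schema and the use of (domain, country) as the matching key are illustrative assumptions, not P&G's method. Real master-data matching usually needs fuzzier logic than this.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class CompanyRecord:
    # Hypothetical minimal schema for an internal company record.
    name: str
    website: str
    country: str


def normalize_domain(website: str) -> str:
    """Reduce a website string to a bare lowercase domain, e.g. 'https://www.Acme.com/about' -> 'acme.com'."""
    raw = website.strip().lower()
    if "://" not in raw:
        raw = "http://" + raw  # without a scheme, urlparse treats the host as part of the path
    host = urlparse(raw).netloc.split(":")[0]  # drop any port number
    return host[len("www."):] if host.startswith("www.") else host


def deduplicate(records: list[CompanyRecord]) -> list[CompanyRecord]:
    """Keep the first record seen for each normalized (domain, country) key."""
    first_seen: dict[tuple[str, str], CompanyRecord] = {}
    for record in records:
        key = (normalize_domain(record.website), record.country.strip().upper())
        first_seen.setdefault(key, record)  # later duplicates are ignored
    return list(first_seen.values())


if __name__ == "__main__":
    raw_records = [
        CompanyRecord("Acme Inc", "https://www.Acme.com/about", "us"),
        CompanyRecord("ACME Incorporated", "acme.com", "US"),
        CompanyRecord("Globex", "globex.io", "DE"),
    ]
    for r in deduplicate(raw_records):
        print(r.name, normalize_domain(r.website))
```

Enriching the deduplicated list means the provider's data is appended once per real company instead of being spread across conflicting copies.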

Also, data hygiene should be treated as ongoing, not a one-time cleanup. So:

Enrichment works best when it operates on a stable, well-governed foundation, and that foundation has to be maintained regularly.
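Because hygiene is ongoing, it helps to measure it on a schedule instead of eyeballing it. The minimal sketch below assumes records arrive as plain dictionaries. It computes a duplicate rate on one matching field and a missing-value rate for each key field, then flags any metric above a tolerance. The field names and the 2% default tolerance are placeholders, not recommended standards.

```python
def audit(records: list[dict], key_fields: list[str], match_field: str) -> dict[str, float]:
    """Return the duplicate rate on `match_field` and the missing-value rate for each key field."""
    total = len(records)
    if total == 0:
        return {}
    match_values = [str(r.get(match_field) or "").strip().lower() for r in records]
    report = {"duplicate_rate": 1 - len(set(match_values)) / total}
    for field_name in key_fields:
        # None, empty strings, and whitespace-only strings all count as missing.
        missing = sum(1 for r in records if not str(r.get(field_name) or "").strip())
        report[f"missing_{field_name}"] = missing / total
    return report


def needs_cleanup(report: dict[str, float], max_rate: float = 0.02) -> list[str]:
    """List the metrics that exceed the (hypothetical) tolerance."""
    return [metric for metric, rate in report.items() if rate > max_rate]


if __name__ == "__main__":
    crm = [
        {"domain": "acme.com", "industry": "Logistics"},
        {"domain": "ACME.com ", "industry": ""},
        {"domain": "globex.io", "industry": "Manufacturing"},
        {"domain": "initech.com", "industry": None},
    ]
    report = audit(crm, key_fields=["industry"], match_field="domain")
    print(report)
    print(needs_cleanup(report))
```

Running a check like this before each enrichment batch, and after it, shows whether the foundation is holding steady or drifting.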

Even perfectly enriched data has a shelf life.

And in B2B environments, that shelf life is shorter than most teams expect.

People change jobs. Companies restructure, rebrand, or shut down. Leadership turns over.

Any one of these changes can make an enriched data point obsolete, often within months of being added.

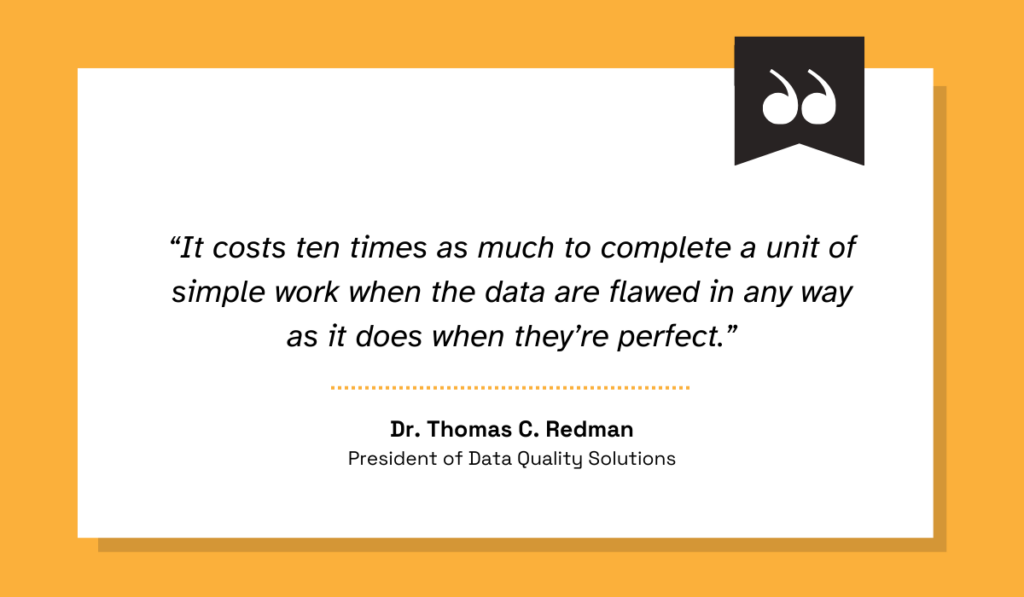

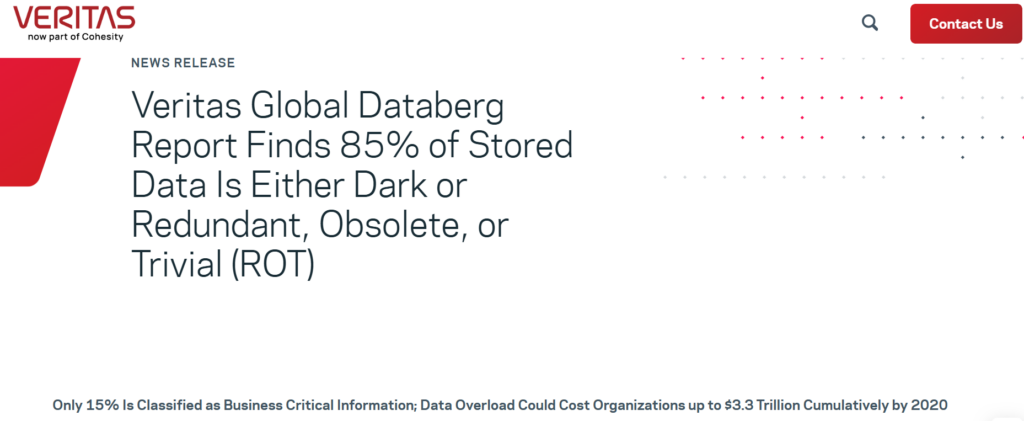

Per the Veritas Global Databerg Report, almost 85% of stored data is either dark or redundant, obsolete, or trivial (ROT).

Source: Veritas

That helps explain the growth of the data enrichment solutions market, which now exceeds $2.3 billion.

At a more granular level, the numbers are just as striking.

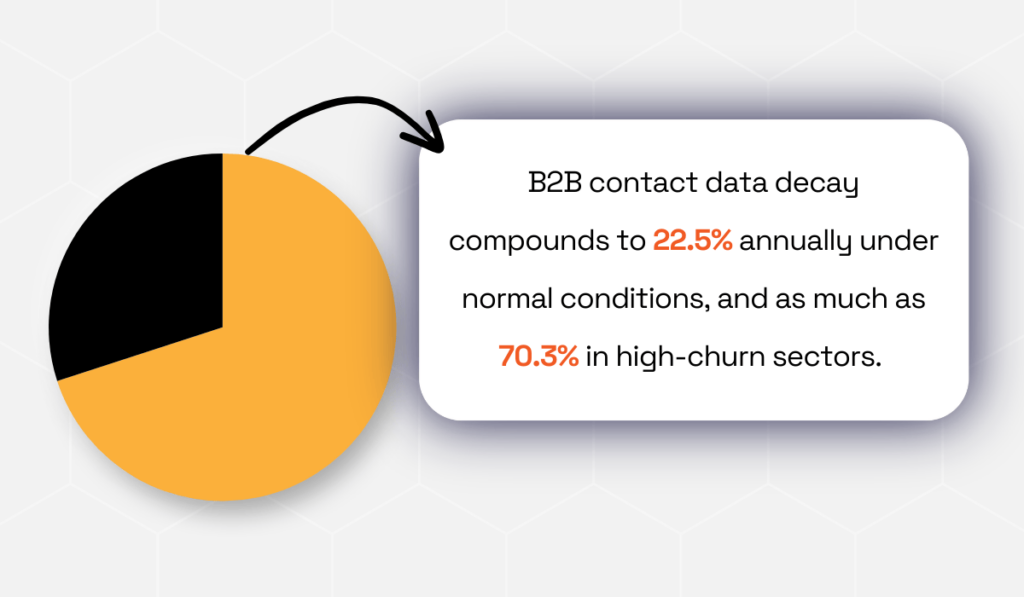

According to Gartner research, B2B contact data can decay by as much as 70.3% per year under certain conditions.

Illustration: Veridion / Data: Gartner
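To see what a decay rate means for refresh timing, suppose decay is spread evenly across the year. This constant-rate model is our assumption, not Gartner's methodology. Under it, the share of records still accurate after t days is (1 - d)^(t/365), where d is the annual decay rate. The sketch below compares refresh intervals at the 70.3% upper bound.

```python
def share_still_accurate(annual_decay: float, days: float) -> float:
    """Fraction of records still accurate after `days`, assuming decay is spread evenly (exponentially) over the year."""
    if not 0.0 <= annual_decay <= 1.0:
        raise ValueError("annual_decay must be between 0 and 1")
    return (1.0 - annual_decay) ** (days / 365.0)


if __name__ == "__main__":
    annual_decay = 0.703  # the upper-bound figure cited above
    for label, days in [("weekly", 7), ("quarterly", 91), ("annual", 365)]:
        stale = 1.0 - share_still_accurate(annual_decay, days)
        print(f"{label:>9} refresh: ~{stale:.1%} of records stale by the next update")
```

Under that assumption, about 2.3% of records go stale between weekly refreshes. The figure rises to roughly 26% between quarterly refreshes and the full 70.3% between annual ones.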

This is why the source of your enrichment data matters just as much as the enrichment process itself.

A provider that updates its database quarterly, or worse, annually, will give you a false sense of completeness.

The data looks accurate. But by the time it reaches your CRM, a meaningful portion of it is already out of date.

So, the benchmark to aim for is continuous or weekly refresh cycles, especially for volatile data like contact information, firmographics, and operational status.

Providers should be able to explain their methodology for detecting company changes, not just tell you how large their database is.
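On your side of the pipeline, the practical question is whether each value has been verified recently enough for its category. The minimal sketch below assumes your pipeline stores a last-verified timestamp per data category, which is a hypothetical storage choice. It flags the categories that have outlived their refresh window. The window lengths are placeholders; only the weekly benchmark for volatile data comes from the text above.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical refresh windows per data category. Volatile categories get the
# weekly benchmark discussed above; slower-moving ones get a longer window.
REFRESH_WINDOWS = {
    "contact": timedelta(days=7),
    "firmographics": timedelta(days=7),
    "operational_status": timedelta(days=7),
    "corporate_hierarchy": timedelta(days=90),
}


def stale_categories(last_verified: dict[str, datetime], now: Optional[datetime] = None) -> list[str]:
    """Return the categories whose last verification is older than their refresh window."""
    now = now or datetime.now(timezone.utc)
    stale = []
    for category, verified_at in last_verified.items():
        window = REFRESH_WINDOWS.get(category)
        if window is None:
            continue  # no policy defined for this category; skipped rather than guessed
        if now - verified_at > window:
            stale.append(category)
    return sorted(stale)


if __name__ == "__main__":
    now = datetime(2024, 6, 30, tzinfo=timezone.utc)
    record = {
        "contact": now - timedelta(days=40),
        "firmographics": now - timedelta(days=3),
        "corporate_hierarchy": now - timedelta(days=30),
    }
    print(stale_categories(record, now))  # ['contact']
```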

When evaluating data enrichment vendors, ask specifically:

These answers reveal whether you are working with a living data source or an aging snapshot dressed up as current intelligence.

Veridion answers these questions transparently.

Trusted by teams across procurement, risk, insurance, and sustainability, Veridion is an AI-powered enrichment platform tracking over 134 million companies worldwide, including over 90% of SMBs that traditional providers routinely miss.

Source: Veridion

Each company profile spans 320+ attributes, updated weekly via a fully custom data pipeline that combines primary sources like company websites and legal registries with validated signals from news feeds, social media, and public filings.

With a rigorous validation process delivering over 95% accuracy, Veridion gives teams a fresh, reliable data foundation that makes enrichment strategies genuinely sustainable over time.

More data fields do not equal better decisions.

This is one of the most persistently misunderstood principles in data strategy, and one of the most costly to get wrong.

Organizations that chase quantity over quality often end up with bloated records packed with attributes nobody uses.

Worse, the maintenance burden grows, and analysts spend more time filtering irrelevant data than acting on relevant insights.

Here’s how widespread these problems are:

Illustration: Veridion / Data: Harvard Business Review

So, the best approach is to identify which data points actually drive decisions for your specific use case and enrich only those.

What serves one workflow well may be entirely irrelevant to another.

For instance, a team prospecting mid-market logistics firms needs very different enrichment attributes than a procurement team vetting industrial suppliers.
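One way to make "enrich only what drives decisions" enforceable is to give each use case an explicit field list and drop everything else a provider returns. The sketch below is minimal. The use-case names and attribute names are hypothetical and only echo the two examples above.

```python
# Hypothetical use cases and attribute names; replace with the fields your teams actually act on.
USE_CASE_FIELDS: dict[str, frozenset[str]] = {
    "logistics_prospecting": frozenset({"employee_count", "fleet_size", "regions_served", "buyer_contacts"}),
    "supplier_vetting": frozenset({"legal_name", "registration_status", "financial_health", "certifications", "parent_company"}),
}


def scope_enrichment(use_case: str, provider_payload: dict) -> dict:
    """Keep only the attributes the given use case needs; everything else is dropped before it reaches the CRM."""
    try:
        wanted = USE_CASE_FIELDS[use_case]
    except KeyError:
        raise ValueError(f"no field list defined for use case {use_case!r}") from None
    return {name: value for name, value in provider_payload.items() if name in wanted}


if __name__ == "__main__":
    payload = {"employee_count": 420, "fleet_size": 130, "certifications": ["ISO 9001"], "founded_year": 1998}
    print(scope_enrichment("logistics_prospecting", payload))
    print(scope_enrichment("supplier_vetting", payload))
```

Raising an error for an unlisted use case is deliberate. Before new enrichment flows in, someone has to decide which fields it needs.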

Unity Technologies, a publicly traded video game software development company, is a telling example here.

In early 2022, the company disclosed that inaccurate data ingested into its machine learning pipelines had corrupted the advertising models its products depended on.

According to IBM’s analysis, the fallout came to approximately $110 million in lost revenue, tied to underperforming models and the cost of retraining corrupted datasets.

The failure here was not a lack of data. The failure was not ensuring that data met a quality threshold (accuracy in this case) before being used at scale.
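The sketch below does not describe Unity's pipeline. It is a generic quality gate of the kind that failure calls for: every incoming record must pass field-level rules, and the batch as a whole must reach a minimum pass rate before it feeds a model or workflow. The rules, field names, and 95% threshold are all hypothetical.

```python
import re
from typing import Any, Callable

DOMAIN_RE = re.compile(r"[a-z0-9-]+(\.[a-z0-9-]+)+")


def valid_domain(value: Any) -> bool:
    return isinstance(value, str) and DOMAIN_RE.fullmatch(value) is not None


def positive_int(value: Any) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, int) and not isinstance(value, bool) and value > 0


# Hypothetical field-level standards, agreed before any data is loaded.
RULES: dict[str, Callable[[dict], bool]] = {
    "domain": lambda r: valid_domain(r.get("domain")),
    "employee_count": lambda r: positive_int(r.get("employee_count")),
}


def gate_batch(records: list[dict], min_pass_rate: float = 0.95) -> tuple[list[dict], bool]:
    """Return the records that pass every rule and whether the batch is fit to load at scale."""
    passed = [r for r in records if all(rule(r) for rule in RULES.values())]
    pass_rate = len(passed) / len(records) if records else 0.0
    return passed, pass_rate >= min_pass_rate


if __name__ == "__main__":
    batch = [
        {"domain": "acme.com", "employee_count": 250},
        {"domain": "not a domain", "employee_count": 40},
        {"domain": "globex.io", "employee_count": 0},
    ]
    clean, fit_to_load = gate_batch(batch)
    print(len(clean), fit_to_load)  # 1 False
```

The batch-level check matters as much as the per-record rules. A low pass rate usually points to a problem at the source, and a problem at the source should stop the load, not just trim it.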

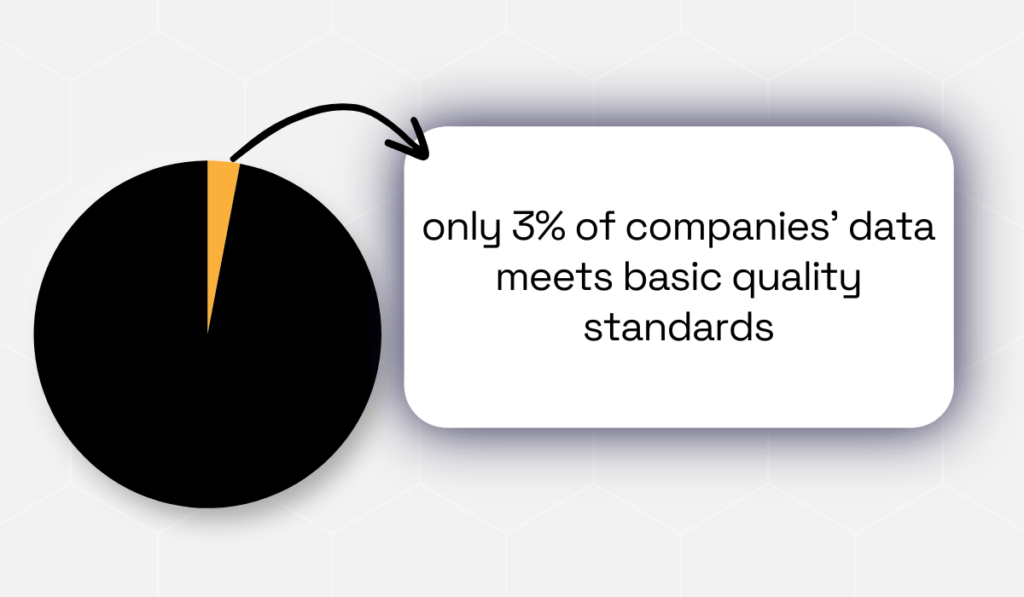

And the data quality problem is not confined to a handful of companies: Gartner research shows that more than half of organizations don't even measure their data quality.

Illustration: Veridion / Data: Gartner

So, the takeaway here? Volume without validation is not an asset, but a liability.

In practice, prioritizing quality means setting clear standards before enrichment begins.

It also means being disciplined about saying no to unnecessary enrichment. Not every company profile needs 50 attributes.

Enriching only what matters keeps records clean, systems fast, and teams focused on using data rather than managing it.

Experian's research supports this: 75% of companies that worked to improve their data quality exceeded their business goals.

As a rule: enrich for purpose, not for completeness. That single principle eliminates most of the overhead that makes data programs fail.

Basic enrichment tells you what a company is. Multi-dimensional enrichment tells you how it operates, what it needs, and when to approach it.

The difference between the two is often the difference between a generic outreach and a well-timed, relevant one.

Most teams start with firmographics: company size, revenue, industry, and headquarters. That is a reasonable foundation.

But stopping there leaves significant value untouched. Organizations getting the most from enrichment are layering in:

The logic is simple.

A record that tells you a prospect is a mid-size logistics firm is a starting point. A record that also tells you they recently expanded their fleet and have been researching supply chain optimization separates you from competitors.
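To make the contrast concrete, here is a minimal sketch of a layered account record. The firmographic fields sit alongside two extra layers, and a small function picks an outreach angle from whichever layers are populated. The layer names, example values, and the ranking of angles are hypothetical illustrations, not a standard model.

```python
from dataclasses import dataclass, field


@dataclass
class EnrichedAccount:
    # Firmographic layer: what the company is.
    name: str
    industry: str
    employee_count: int
    # Hypothetical extra layers: what the company is doing and researching now.
    recent_events: list[str] = field(default_factory=list)
    intent_topics: list[str] = field(default_factory=list)


def outreach_angle(account: EnrichedAccount, offer_topics: set[str]) -> str:
    """Pick an outreach angle from whichever enrichment layers are populated."""
    relevant = [topic for topic in account.intent_topics if topic in offer_topics]
    if account.recent_events and relevant:
        return f"timely and relevant: {account.recent_events[0]}, researching {relevant[0]}"
    if relevant:
        return f"relevant: researching {relevant[0]}"
    if account.recent_events:
        return f"timely: {account.recent_events[0]}"
    return "generic: firmographics only"


if __name__ == "__main__":
    offer = {"supply chain optimization"}
    basic = EnrichedAccount("Acme Freight", "Logistics", 450)
    layered = EnrichedAccount(
        "Acme Freight", "Logistics", 450,
        recent_events=["fleet expansion"],
        intent_topics=["supply chain optimization"],
    )
    print(outreach_angle(basic, offer))
    print(outreach_angle(layered, offer))
```

Both records describe the same company. Only the layered one gives a reason to reach out now.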

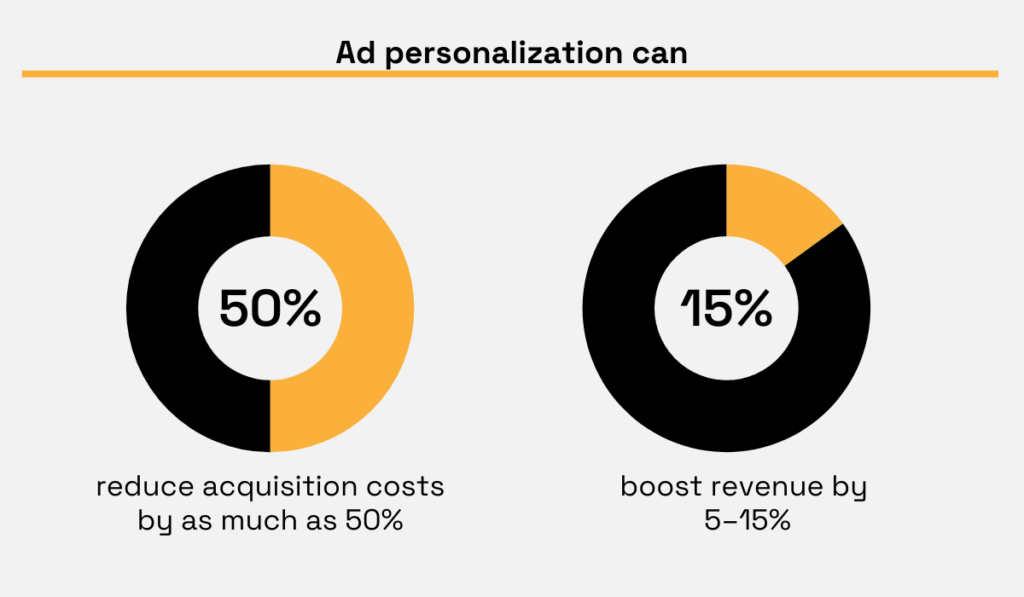

Companies that go beyond basic firmographics see it in the numbers, too.

According to Demandbase research, ad personalization powered by enrichment can reduce acquisition costs by as much as 50% and boost revenue by 5–15%.

Illustration: Veridion / Data: Demandbase

In procurement and supplier risk management, this approach is especially powerful.

Enriching supplier records with financial health signals, operational footprint data, corporate hierarchy details, and ESG compliance attributes gives procurement teams the full context they need to assess risk and make sourcing decisions with confidence.
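As a rough illustration, the sketch below turns those four dimensions into reviewable risk flags for a single supplier. The 0-100 financial health scale, the 50-point cut-off, the restricted-country list, and every field name are hypothetical assumptions. Real supplier-risk scoring is considerably richer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SupplierProfile:
    # Hypothetical enriched attributes, one per dimension named above.
    name: str
    financial_health_score: Optional[float]  # assumed 0-100 scale, higher is healthier
    operating_countries: list[str]           # operational footprint (country codes)
    ultimate_parent: Optional[str]           # corporate hierarchy
    esg_certifications: list[str]            # ESG compliance attributes


def risk_flags(supplier: SupplierProfile, restricted_countries: set[str], min_financial_score: float = 50.0) -> list[str]:
    """Return human-readable flags; an empty list means nothing in the enriched profile raised concern."""
    flags = []
    if supplier.financial_health_score is None:
        flags.append("financial health unknown")
    elif supplier.financial_health_score < min_financial_score:
        flags.append("weak financial health")
    exposed = sorted(set(supplier.operating_countries) & restricted_countries)
    if exposed:
        flags.append("operates in restricted countries: " + ", ".join(exposed))
    if supplier.ultimate_parent is None:
        flags.append("ultimate parent not identified")
    if not supplier.esg_certifications:
        flags.append("no ESG certifications on record")
    return flags


if __name__ == "__main__":
    risky = SupplierProfile("Northwind Components", 38.0, ["DE", "XX"], None, [])
    print(risk_flags(risky, restricted_countries={"XX"}))
```

Missing data is flagged explicitly instead of being treated as "no risk." For supplier vetting, an unknown parent company or an absent financial signal is itself worth a second look.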

The practical starting point is to map the decisions your teams need to make and work backwards. What context would make each decision faster or more accurate?

That question defines which dimensions of data enrichment are worth prioritizing and which you can safely skip.

Data enrichment does not happen in a legal vacuum.

Every time you collect, append to, or process data, especially when using third-party sources, you operate within a regulatory framework that carries real consequences for non-compliance.

GDPR in Europe, CCPA in California, LGPD in Brazil, and a growing set of national and sector-specific laws all define how data must be sourced, used, stored, and deleted.

The clearest recent signal of how serious regulators are?

In May 2023, Ireland's Data Protection Commission, acting on a binding decision from the European Data Protection Board, fined Meta a record €1.2 billion, the largest GDPR penalty ever issued, for unlawfully transferring European user data to the United States.

Source: Bitdefender

Since GDPR took effect in 2018, regulators have issued nearly €7.1 billion in fines.

This is a direct message to organizations worldwide: data-handling practices are under active scrutiny, and penalties are not symbolic.

For data enrichment specifically, compliance means understanding:

It also means vetting the compliance posture of any third-party provider you work with.

JPMorgan Chase, as one example, addressed the compliance risks of large-scale data sharing by building a governed data architecture centered on ‘data products.’

Each domain dataset is owned by dedicated teams responsible for its quality, usage, and regulatory obligations.

A centralized data catalog tracks access requests and usage, while strict controls prevent unauthorized copying.

This structure allows the bank to share data across the organization while maintaining strong auditability, regulatory compliance, and controlled access.
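The internals of JPMorgan Chase's system are not public here. The sketch below only illustrates the general pattern described above. Each data product has an owning team, only that team can approve new consumers, and every access request, granted or denied, is logged for audit. All class and field names are hypothetical, and the sketch omits the copy controls a real system would need.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DataProduct:
    name: str
    owner_team: str  # the team accountable for quality, usage, and regulatory obligations
    approved_consumers: set[str] = field(default_factory=set)


@dataclass
class Catalog:
    products: dict[str, DataProduct] = field(default_factory=dict)
    # Every request, granted or denied, is recorded as (UTC timestamp, consumer, product, granted).
    access_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def register(self, product: DataProduct) -> None:
        self.products[product.name] = product

    def approve(self, product_name: str, consumer: str, approver_team: str) -> None:
        product = self.products[product_name]
        if approver_team != product.owner_team:
            raise PermissionError(f"only {product.owner_team} can approve access to {product_name}")
        product.approved_consumers.add(consumer)

    def request_access(self, consumer: str, product_name: str) -> bool:
        product = self.products.get(product_name)
        granted = product is not None and consumer in product.approved_consumers
        self.access_log.append((datetime.now(timezone.utc).isoformat(), consumer, product_name, granted))
        return granted


if __name__ == "__main__":
    catalog = Catalog()
    catalog.register(DataProduct("supplier_master", owner_team="procurement-data"))
    print(catalog.request_access("marketing", "supplier_master"))
    catalog.approve("supplier_master", "risk", approver_team="procurement-data")
    print(catalog.request_access("risk", "supplier_master"))
    print(len(catalog.access_log))
```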

When selecting an enrichment provider, treat compliance certifications as non-negotiable.

Ask whether they hold SOC 2 Type II certification and whether they are GDPR- and CCPA-compliant. Also ask them to explain clearly how they source their data and how they handle opt-out and deletion requests.
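The same questions apply to your own pipeline. Opt-outs and deletion requests your organization receives should be honored before any record is sent out for enrichment. The minimal sketch below keeps a suppression list of SHA-256 fingerprints of normalized email addresses and filters records against it. The record shape and the fingerprinting choice are assumptions. Hashed identifiers are pseudonymized data, which GDPR still treats as personal data, so the suppression list itself needs protecting.

```python
import hashlib


def email_fingerprint(email: str) -> str:
    """SHA-256 of a trimmed, lowercased email, so the suppression list avoids storing raw addresses."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def apply_suppression(records: list[dict], suppressed: set[str]) -> list[dict]:
    """Drop every record whose email fingerprint is on the suppression list (opt-outs and erasure requests)."""
    return [r for r in records if email_fingerprint(r["email"]) not in suppressed]


if __name__ == "__main__":
    suppressed = {email_fingerprint("ana@example.com")}  # e.g. logged when Ana opted out
    outbound = apply_suppression(
        [{"email": "Ana@Example.com", "company": "Acme"}, {"email": "bo@example.com", "company": "Globex"}],
        suppressed,
    )
    print([r["email"] for r in outbound])  # ['bo@example.com']
```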

The right mindset is to treat compliance not as a constraint on enrichment but as a design requirement.

Organizations that build it in from the start tend to end up with cleaner, more purposeful data because compliance forces precision about what you collect, why you collect it, and how long you keep it.

That discipline, in turn, improves every downstream use of that data.

Data enrichment, done right, is one of the highest-leverage investments a data-driven team can make.

But it requires discipline at every step: clean foundations, current sources, quality over volume, depth across dimensions, and compliance built in from the start.

Organizations that get it right do not just have better data. They have faster sales cycles, sharper targeting, more defensible risk models, and teams that spend their time acting on insight rather than correcting errors.

Done without structure, however, enrichment compounds the problems it was meant to solve.

Remember, in a world drowning in data, the winners will be those who enrich wisely.